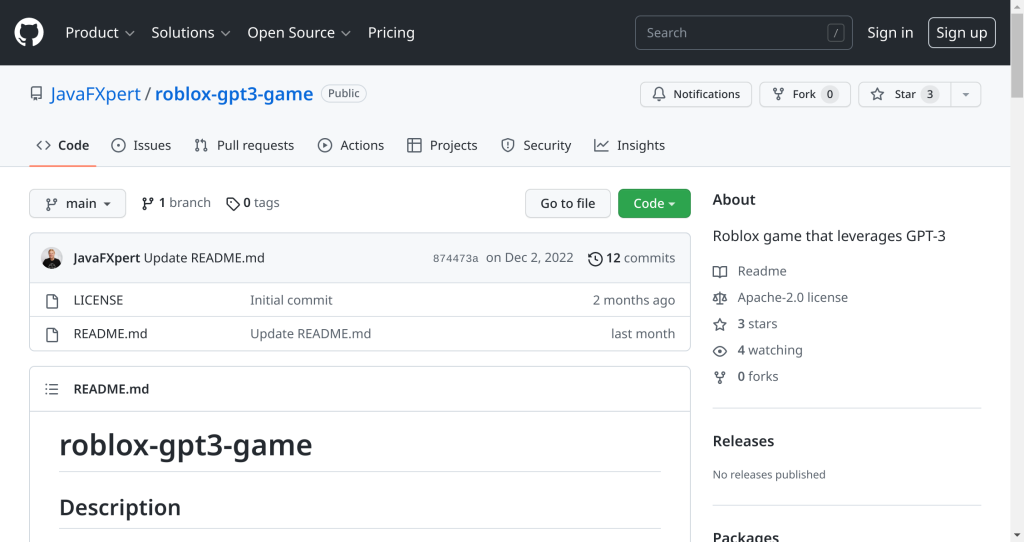

Microsoft and NVIDIA have jointly created the Megatron-Turing Natural Language Generation model (MT-NLG), a state-of-the-art language model with 530 billion parameters. This monolithic transformer language model is about three times larger than the largest existing model of its kind. It performs a broad set of natural language tasks with high accuracy, including completion prediction, reading comprehension, commonsense reasoning, natural language inference and word sense disambiguation.

The model was trained on NVIDIA's Selene supercomputer, which consists of 560 NVIDIA DGX A100 servers linked by HDR InfiniBand in a full fat-tree configuration. Each server contains eight NVIDIA A100 80GB Tensor Core GPUs, fully connected to one another through NVLink and NVSwitch. Training used mixed precision, which performs most arithmetic in a 16-bit floating-point format while keeping numerically sensitive values in 32-bit. This raises throughput and reduces memory use while keeping accuracy close to that of full-precision training.
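The hardware figures above can be turned into a quick back-of-the-envelope calculation that shows why a model of this size needs a cluster like Selene. The Python sketch below is illustrative only. The server, GPU and parameter counts come from the text. The 2-bytes-per-parameter (16-bit) weight storage, the decimal gigabyte (10^9 bytes) and the decision to ignore gradients, optimizer state and activations are simplifying assumptions, not details of how MT-NLG was actually trained.

```python
import math

# Figures quoted in the text above.
NUM_SERVERS = 560               # DGX A100 servers in Selene
GPUS_PER_SERVER = 8             # A100 GPUs per DGX A100 server
GPU_MEMORY_GB = 80              # memory per A100 80GB GPU
NUM_PARAMS = 530_000_000_000    # MT-NLG parameter count

# Assumptions for this sketch (not from the source): weights stored in a
# 16-bit format (2 bytes each), 1 GB = 10**9 bytes, and gradients,
# optimizer state and activations ignored entirely.
BYTES_PER_PARAM_16BIT = 2
BYTES_PER_GB = 10**9


def cluster_summary() -> dict:
    """Return rough cluster-size and weight-memory figures."""
    total_gpus = NUM_SERVERS * GPUS_PER_SERVER
    total_gpu_memory_gb = total_gpus * GPU_MEMORY_GB
    # Memory needed just to hold one copy of the weights in 16-bit precision.
    weights_gb = NUM_PARAMS * BYTES_PER_PARAM_16BIT // BYTES_PER_GB
    # Smallest number of 80 GB GPUs whose combined memory could fit those weights.
    min_gpus_for_weights = math.ceil(weights_gb / GPU_MEMORY_GB)
    return {
        "total_gpus": total_gpus,
        "total_gpu_memory_gb": total_gpu_memory_gb,
        "weights_gb_16bit": weights_gb,
        "min_gpus_to_hold_weights": min_gpus_for_weights,
    }


if __name__ == "__main__":
    for name, value in cluster_summary().items():
        print(f"{name}: {value}")
```

Under these assumptions, Selene provides 4,480 GPUs with 358,400 GB of combined GPU memory, while the 16-bit weights alone occupy about 1,060 GB, which already needs at least 14 GPUs just to store. The real training footprint is considerably larger once gradients and optimizer state are included, which is why the model has to be partitioned across many GPUs.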